
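3D Perspective Projection

Preface

WARNING

Heads up: math derivations ahead!

In the previous article, "3D Orthographic Projection", we derived the 3D orthographic projection matrix. We can think of it simply as a way of remapping coordinates: it maps coordinates in one space to coordinates in another.

Today's topic is a little more involved. Let's find out what perspective projection is really about!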

What Is Perspective Projection

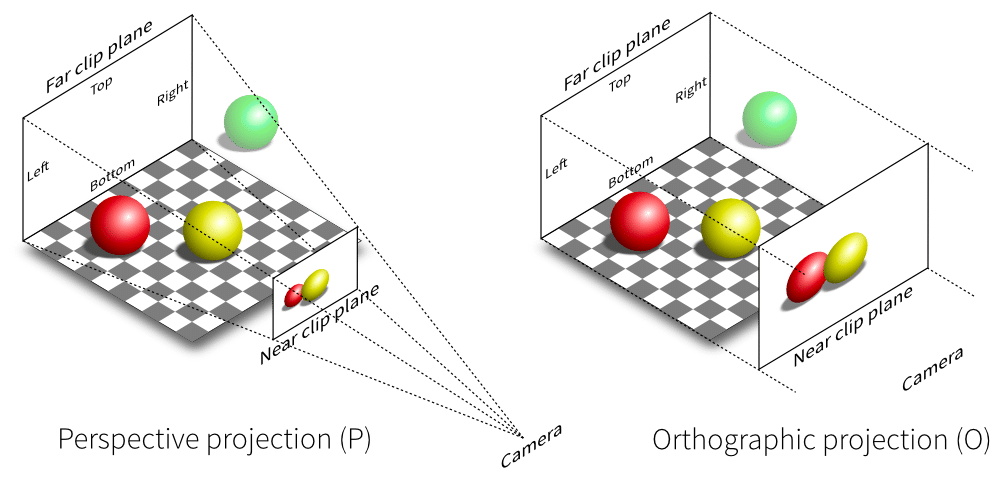

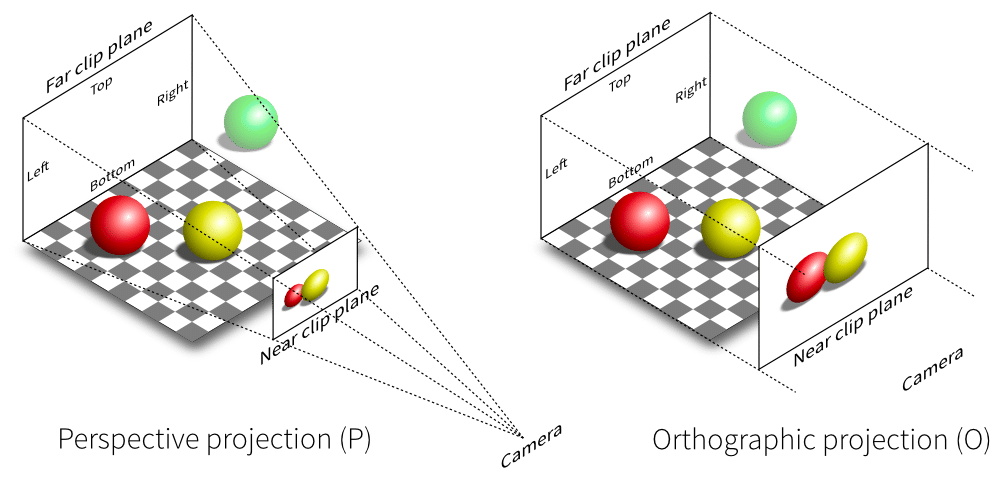

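When we look at the world, one phenomenon is everywhere: distant objects look small, and the closer an object is, the larger it looks. Simulating this effect in WebGL is what we call "perspective projection". The figures at the start of this section illustrate the difference between orthographic and perspective projection.

As the figures show, in perspective projection the viewing volume is no longer a box but a "frustum": a four-sided pyramid with its tip "sliced off". The smaller face is called the "near plane" and the larger face the "far plane".

Projection maps the coordinates inside the frustum onto the near plane. In which direction? Toward the apex of the pyramid, which is where the camera sits.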

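Building the Perspective Projection Matrix

Now let's build the perspective projection matrix. You could of course just use the method provided by the gl-matrix library, but I would like you to really understand why the matrix looks the way it does.

So far we have not introduced the concept of a "scene graph", also known as a "hierarchy tree" or "node tree" (we will get to it in a later article). For now, therefore, all of our coordinates live in a single coordinate system. Let's call it the "world", so all of our coordinates are "world coordinates".

The GPU, however, works with NDC (Normalized Device Coordinates). You can think of NDC space as a cube in which every axis ranges from -1 to 1.

So how do we map coordinates in the frustum into NDC space?

It takes two steps:

- Project the coordinates in the frustum onto the near plane

- Map the coordinates on the near plane into NDC space (see "3D Orthographic Projection")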

投影到近平面

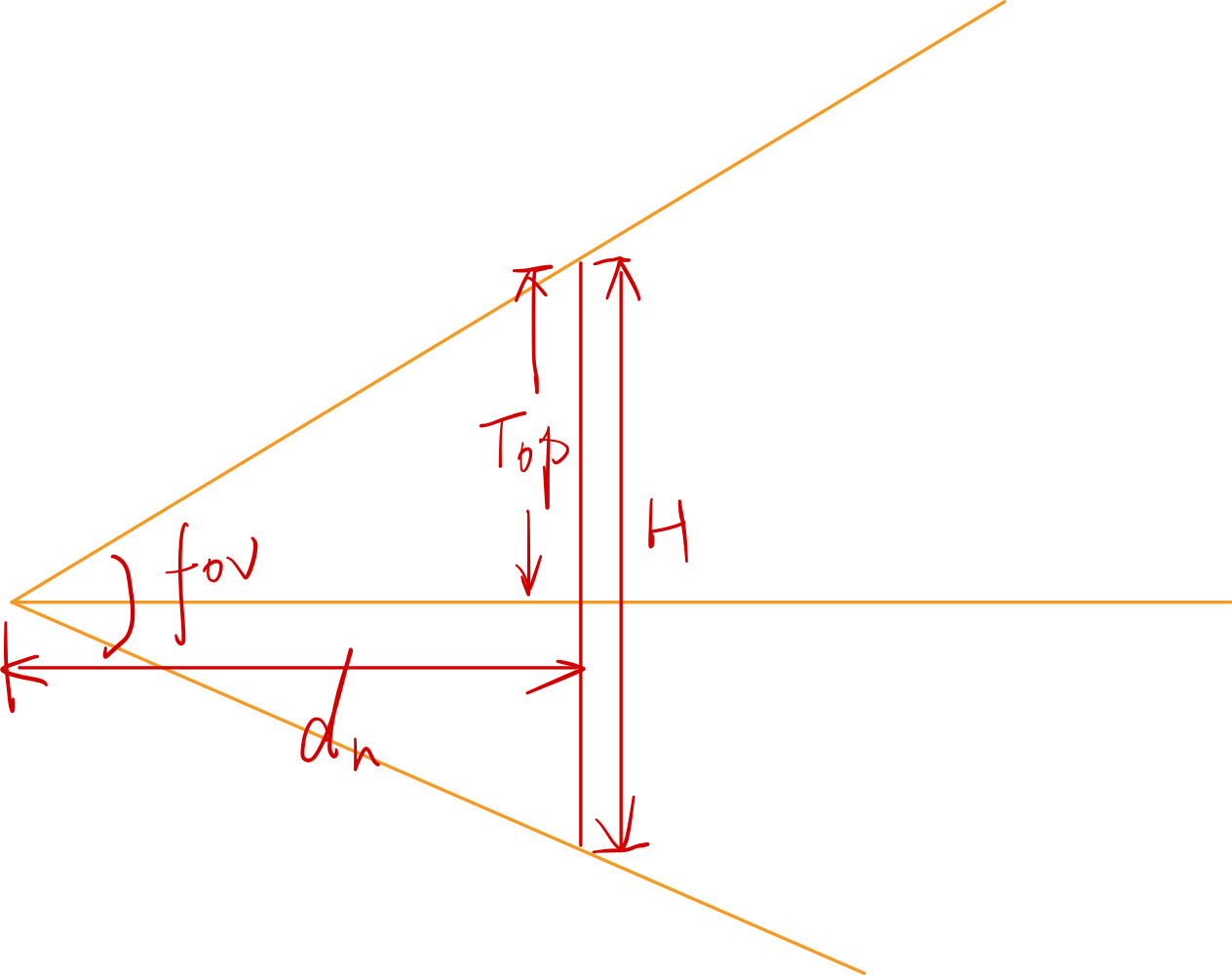

请仔细观察下图:

上图中的 表示平截头体中的任意一点, 与四面体顶点的连线与近平面的交点为 。

我们可以观察到图中的两个橙色阴影三角形是相似三角形。所以可以得出以下结论:

其中:表示的是近平面距离相机原点的距离,由于平截头体与我们的坐标系的 z 轴的方向相反,所以这里我们需要加上一个负号。

这里我们得到了投影后的 x' 和 y' 的坐标,根据齐次坐标的表示法,我们还可以将其写为:

此时,我们投影后的 z 坐标还未知,所以用 “?” 表示。
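To make the similar-triangle relation concrete, here is a minimal TypeScript sketch. It assumes the camera sits at the origin and looks down the negative z axis, as in the derivation. The `Vec3` type and the `projectToNearPlane` helper are names made up for illustration, and the function returns the geometric intersection point, so its z is simply $-n$ rather than the depth value that the derivation leaves open.

```typescript
// A point in camera space: the camera is at the origin and looks down -z.
type Vec3 = [number, number, number];

// Project p onto the near plane z = -n along the line through the origin.
// Hypothetical helper for illustration only, not part of any library.
function projectToNearPlane(p: Vec3, n: number): Vec3 {
  const [x, y, z] = p;
  if (z >= 0) {
    throw new Error('the point must be in front of the camera (z < 0)');
  }
  const ratio = n / -z; // similar-triangle ratio n / (-z)
  return [x * ratio, y * ratio, -n];
}

// With n = 1, a point four units away shrinks by a factor of four.
console.log(projectToNearPlane([8, 4, -4], 1)); // [2, 1, -1]
```

Doubling the distance of the point halves the projected x and y, which is exactly the "far objects look smaller" effect.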

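TIP

Homogeneous coordinates: we introduce an extra component $w$ as the homogeneous term. For example, $A = (x, y, z, w)$ is equivalent to $A = (x/w, y/w, z/w)$, and that is all there is to it.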
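A tiny sketch of that equivalence: dividing by $w$ recovers the ordinary 3D point, so scaling all four components by the same non-zero factor does not change the point. `toCartesian` is a hypothetical helper name, not a gl-matrix function.

```typescript
type Vec4 = [number, number, number, number];

// Convert homogeneous coordinates (x, y, z, w) to the 3D point (x/w, y/w, z/w).
function toCartesian(v: Vec4): [number, number, number] {
  const [x, y, z, w] = v;
  if (w === 0) {
    throw new Error('w = 0 does not describe a finite point');
  }
  return [x / w, y / w, z / w];
}

// Both tuples describe the same point.
console.log(toCartesian([1, 2, 3, 1])); // [1, 2, 3]
console.log(toCartesian([2, 4, 6, 2])); // [1, 2, 3]
```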

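Now we need to build a matrix $M$ that produces the result above when multiplied by the coordinates of $P$:

$$
M \begin{pmatrix} x \\ y \\ z \\ 1 \end{pmatrix}
=
\begin{pmatrix} nx \\ ny \\ ? \\ -z \end{pmatrix}
$$

So what are the entries of $M$? From the rules of matrix multiplication it is easy to see that $M$ must have the following form (the projected z must not depend on x or y, so the third row has only two unknown entries):

$$
M =
\begin{pmatrix}
n & 0 & 0 & 0 \\
0 & n & 0 & 0 \\
0 & 0 & A & B \\
0 & 0 & -1 & 0
\end{pmatrix}
$$

The entries $A$ and $B$ are not determined yet. Now consider this: if a point $P$ happens to lie on the near plane ($z = -n$), its z coordinate stays the same after projection, and the same holds for a point on the far plane ($z = -f$, where $f$ is the distance from the camera to the far plane). Combining this rule with the matrix product and the division by $w = -z$, we get:

$$
\frac{A\,(-n) + B}{n} = -n,
\qquad
\frac{A\,(-f) + B}{f} = -f
$$

That is, $-An + B = -n^2$ and $-Af + B = -f^2$. Subtracting the second equation from the first gives $A\,(f - n) = f^2 - n^2$, so solving for $A$ and $B$ yields:

$$
A = n + f, \qquad B = nf
$$

So the matrix $M$ is:

$$
M =
\begin{pmatrix}
n & 0 & 0 & 0 \\
0 & n & 0 & 0 \\
0 & 0 & n + f & nf \\
0 & 0 & -1 & 0
\end{pmatrix}
$$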
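As a sanity check on the values $A = n + f$ and $B = nf$ (which is what solving the two boundary conditions gives), the following sketch builds the matrix in row-major form, the way it is written on paper, applies it to a few points, and performs the division by $w$. The helper names `nearPlaneProjection` and `applyAndDivide` and the sample values $n = 1$, $f = 100$ are assumptions chosen for illustration.

```typescript
type Vec3 = [number, number, number];
type Vec4 = [number, number, number, number];
type Mat4Rows = [Vec4, Vec4, Vec4, Vec4]; // row-major, as written on paper

// Matrix that projects x and y onto the near plane and keeps the depth of
// points lying on the near and far planes unchanged.
function nearPlaneProjection(n: number, f: number): Mat4Rows {
  return [
    [n, 0, 0, 0],
    [0, n, 0, 0],
    [0, 0, n + f, n * f],
    [0, 0, -1, 0],
  ];
}

// Multiply the matrix by (x, y, z, 1) and divide the result by w.
function applyAndDivide(m: Mat4Rows, p: Vec3): Vec3 {
  const v: Vec4 = [p[0], p[1], p[2], 1];
  const dot = (row: Vec4): number =>
    row[0] * v[0] + row[1] * v[1] + row[2] * v[2] + row[3] * v[3];
  const w = dot(m[3]);
  return [dot(m[0]) / w, dot(m[1]) / w, dot(m[2]) / w];
}

const m = nearPlaneProjection(1, 100);
console.log(applyAndDivide(m, [8, 4, -1])[2]);   // -1   (near plane: unchanged)
console.log(applyAndDivide(m, [8, 4, -100])[2]); // -100 (far plane: unchanged)
console.log(applyAndDivide(m, [8, 4, -4])[2]);   // -76  (in between: not linear)
```

The last line shows that depth between the two planes is remapped non-linearly: only the ordering of depths is preserved, which is all the depth test needs.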

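At this point we have projected a point in space onto the near plane. Now we can proceed much as we did for orthographic projection and map the points on the near plane into the range [-1, 1].

The matrix that maps from the camera's near plane (together with the depth range between the near and far planes) into [-1, 1] is therefore:

$$
M_{ortho} =
\begin{pmatrix}
\dfrac{2}{W} & 0 & 0 & 0 \\
0 & \dfrac{2}{H} & 0 & 0 \\
0 & 0 & \dfrac{2}{f - n} & \dfrac{f + n}{f - n} \\
0 & 0 & 0 & 1
\end{pmatrix}
$$

Here we assume Left = -Right and Bottom = -Top, so the near plane spans $[-W/2,\ W/2] \times [-H/2,\ H/2]$, where $W$ and $H$ are its width and height.

WebGL's NDC space is left-handed, whereas by convention our world space is right-handed, so we also need to convert to a left-handed system by negating z, that is, by applying $S = \mathrm{diag}(1, 1, -1, 1)$. Multiplying these with the matrix $M$ above gives the final result:

$$
M_{persp} = S \, M_{ortho} \, M =
\begin{pmatrix}
\dfrac{2n}{W} & 0 & 0 & 0 \\
0 & \dfrac{2n}{H} & 0 & 0 \\
0 & 0 & -\dfrac{f + n}{f - n} & -\dfrac{2fn}{f - n} \\
0 & 0 & -1 & 0
\end{pmatrix}
$$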
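The composition described above can be checked numerically. The sketch below assumes a symmetric near plane of width `w` and height `h`, builds the orthographic remap with the z axis already flipped for WebGL's left-handed NDC, multiplies it by the near-plane projection matrix, and compares the product with the closed-form perspective matrix. All function names are illustrative, and matrices are stored row-major to match the written math.

```typescript
type Mat4 = number[][]; // 4x4, row-major

function multiply(a: Mat4, b: Mat4): Mat4 {
  const out: Mat4 = [];
  for (let i = 0; i < 4; i++) {
    const row: number[] = [];
    for (let j = 0; j < 4; j++) {
      let sum = 0;
      for (let k = 0; k < 4; k++) sum += a[i][k] * b[k][j];
      row.push(sum);
    }
    out.push(row);
  }
  return out;
}

// Projects x and y onto the near plane; keeps depth at z = -n and z = -f.
function nearPlaneProjection(n: number, f: number): Mat4 {
  return [
    [n, 0, 0, 0],
    [0, n, 0, 0],
    [0, 0, n + f, n * f],
    [0, 0, -1, 0],
  ];
}

// Orthographic remap of the box [-w/2, w/2] x [-h/2, h/2] x [-f, -n] to NDC,
// with z flipped so that the near plane lands on -1 and the far plane on +1.
function orthoToNdc(w: number, h: number, n: number, f: number): Mat4 {
  return [
    [2 / w, 0, 0, 0],
    [0, 2 / h, 0, 0],
    [0, 0, -2 / (f - n), -(f + n) / (f - n)],
    [0, 0, 0, 1],
  ];
}

// Closed-form perspective matrix expressed with the near-plane size.
function perspectiveFromSize(w: number, h: number, n: number, f: number): Mat4 {
  return [
    [(2 * n) / w, 0, 0, 0],
    [0, (2 * n) / h, 0, 0],
    [0, 0, -(f + n) / (f - n), (-2 * f * n) / (f - n)],
    [0, 0, -1, 0],
  ];
}

function maxAbsDifference(a: Mat4, b: Mat4): number {
  let max = 0;
  for (let i = 0; i < 4; i++) {
    for (let j = 0; j < 4; j++) max = Math.max(max, Math.abs(a[i][j] - b[i][j]));
  }
  return max;
}

const composed = multiply(orthoToNdc(2, 1, 1, 100), nearPlaneProjection(1, 100));
const closedForm = perspectiveFromSize(2, 1, 1, 100);
console.log(maxAbsDifference(composed, closedForm) < 1e-12); // true
```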

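In practice, however, we usually do not specify the projection matrix through the near plane's width $W$ and height $H$. Instead we use the vertical field of view ($fov$) and the aspect ratio of the picture ($aspect$, width divided by height).

Since the near plane lies at distance $n$ from the camera, $W$ and $H$ can be expressed as:

$$
H = 2n \tan\frac{fov}{2},
\qquad
W = aspect \cdot H = 2n \cdot aspect \cdot \tan\frac{fov}{2}
$$

Substituting these into the projection matrix, with $n$ and $f$ the distances to the near and far planes, its final form is:

$$
M_{persp} =
\begin{pmatrix}
\dfrac{1}{aspect \cdot \tan\frac{fov}{2}} & 0 & 0 & 0 \\
0 & \dfrac{1}{\tan\frac{fov}{2}} & 0 & 0 \\
0 & 0 & -\dfrac{f + n}{f - n} & -\dfrac{2fn}{f - n} \\
0 & 0 & -1 & 0
\end{pmatrix}
$$

This completes the derivation of the perspective projection matrix.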
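Putting the final matrix into code, a minimal sketch might look like the following. It stores the matrix in column-major order, which is the layout `gl.uniformMatrix4fv` expects when `transpose` is `false`, and it takes `fovY` in radians. The function names `perspective` and `toNdc` are illustrative; gl-matrix's `mat4.perspective(out, fovy, aspect, near, far)` builds the same matrix and likewise expects the angle in radians.

```typescript
// Build the perspective matrix as 16 numbers in column-major order.
// fovY is the vertical field of view in radians.
function perspective(fovY: number, aspect: number, near: number, far: number): number[] {
  const t = 1 / Math.tan(fovY / 2);
  const range = far - near;
  // Each group of four numbers below is one COLUMN of the matrix.
  return [
    t / aspect, 0, 0, 0,
    0, t, 0, 0,
    0, 0, -(far + near) / range, -1,
    0, 0, (-2 * far * near) / range, 0,
  ];
}

// Transform a camera-space point to NDC: multiply by the matrix, divide by w.
function toNdc(m: number[], p: [number, number, number]): [number, number, number] {
  const [x, y, z] = p;
  const cx = m[0] * x + m[4] * y + m[8] * z + m[12];
  const cy = m[1] * x + m[5] * y + m[9] * z + m[13];
  const cz = m[2] * x + m[6] * y + m[10] * z + m[14];
  const cw = m[3] * x + m[7] * y + m[11] * z + m[15];
  return [cx / cw, cy / cw, cz / cw];
}

// 90 degree vertical field of view, aspect 2, near = 1, far = 100.
const proj = perspective(Math.PI / 2, 2, 1, 100);
console.log(toNdc(proj, [0, 0, -1])[2]);   // approximately -1 (near plane)
console.log(toNdc(proj, [0, 0, -100])[2]); // approximately  1 (far plane)
```

With these sample values the top-right corner of the near plane, `(2, 1, -1)`, lands at roughly `(1, 1, -1)` in NDC, which is a quick way to confirm that `fovY` and `aspect` are wired up as intended.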

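Summary

If you have read this far, congratulations: you have nearly made it over a mountain, and victory is in sight. Next we will cover the camera. You can check your own code against the demo below and the code at the end of this article.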

(Interactive demo: sliders for TranslateX, TranslateY, TranslateZ, RotationZ, RotationY, RotationX, and Scale.)


ts

import { mat4 } from 'gl-matrix';
// Assumption: initWebGL is the helper from the utility file listed after this demo;
// adjust the import path to match your project.
import { initWebGL } from './utils';

const canvas = document.getElementById('canvas2') as HTMLCanvasElement;
const gl = canvas.getContext('webgl');
if (!gl) {
  throw new Error('WebGL is not available.');
}

// Set the color used when clearing the color buffer
gl.clearColor(1.0, 1.0, 1.0, 1.0);
// Clear the color buffer
gl.clear(gl.COLOR_BUFFER_BIT);

// Vertex shader
const vertexShader = `
  attribute vec4 a_position;
  attribute vec3 a_color;
  uniform mat4 u_translate;
  uniform mat4 u_rotate;
  uniform mat4 u_scale;
  uniform mat4 u_proj;
  varying vec3 v_color;

  void main () {
    gl_Position = u_proj * u_translate * u_rotate * u_scale * a_position;
    v_color = a_color;
  }
`;

// Fragment shader
const fragmentShader = `
  // Set the default float precision
  precision mediump float;
  varying vec3 v_color;

  void main () {
    // vec4 is a four-component vector; here it holds RGBA values in [0, 1].
    // All components must be floats; integer literals cause a compile error.
    gl_FragColor = vec4(v_color, 1.0);
  }
`;

// Initialize the shader program
const program = initWebGL(gl, vertexShader, fragmentShader);
if (!program) {
  throw new Error('Failed to create the shader program.');
}
// Tell WebGL to use the program we just initialized
gl.useProgram(program);
gl.enable(gl.DEPTH_TEST);

const width = 50;
const height = 50;
const depth = 50;

// Six faces, two triangles each: 36 vertices in total
//prettier-ignore
const pointPos = [
  // front-face
  0, 0, 0, width, 0, 0, width, height, 0, width, height, 0, 0, height, 0, 0, 0, 0,
  // back-face
  0, 0, depth, width, 0, depth, width, height, depth, width, height, depth, 0, height, depth, 0, 0, depth,
  // left-face
  0, 0, 0, 0, height, 0, 0, height, depth, 0, height, depth, 0, 0, depth, 0, 0, 0,
  // right-face
  width, 0, 0, width, height, 0, width, height, depth, width, height, depth, width, 0, depth, width, 0, 0,
  // top-face
  0, height, 0, width, height, 0, width, height, depth, width, height, depth, 0, height, depth, 0, height, 0,
  // bottom-face
  0, 0, 0, width, 0, 0, width, 0, depth, width, 0, depth, 0, 0, depth, 0, 0, 0,
];

// Center the cube on the origin
for (let i = 0; i < pointPos.length; i += 3) {
  pointPos[i] += -width / 2;
  pointPos[i + 1] += -height / 2;
  pointPos[i + 2] += -depth / 2;
}

// One color per vertex, one row per face. Seven rows are listed but only
// 36 vertices are drawn, so the last row is never read.
//prettier-ignore
const colors = [
  1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0,
  1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0,
  1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1,
  0, 0.5, 1, 0, 0.5, 1, 0, 0.5, 1, 0, 0.5, 1, 0, 0.5, 1, 0, 0.5, 1,
  0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1,
  0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 1,
  0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 0,
];

const buffer = gl.createBuffer();
gl.bindBuffer(gl.ARRAY_BUFFER, buffer);
gl.bufferData(gl.ARRAY_BUFFER, new Float32Array(pointPos), gl.STATIC_DRAW);

const colorBuffer = gl.createBuffer();
gl.bindBuffer(gl.ARRAY_BUFFER, colorBuffer);
gl.bufferData(gl.ARRAY_BUFFER, new Float32Array(colors), gl.STATIC_DRAW);

gl.bindBuffer(gl.ARRAY_BUFFER, buffer);
// Look up the location of a_position in the shader
const a_position = gl.getAttribLocation(program, 'a_position');
// We no longer pass the value this way:
// gl.vertexAttrib3f(a_position, 0.0, 0.0, 0.0);
// Instead, feed the attribute from the bound buffer with vertexAttribPointer
gl.vertexAttribPointer(
  a_position,
  3,
  gl.FLOAT,
  false,
  Float32Array.BYTES_PER_ELEMENT * 3,
  0
);
gl.enableVertexAttribArray(a_position);

gl.bindBuffer(gl.ARRAY_BUFFER, colorBuffer);
const a_color = gl.getAttribLocation(program, 'a_color');
// The colors are fed from their own buffer in the same way
gl.vertexAttribPointer(
  a_color,
  3,
  gl.FLOAT,
  false,
  Float32Array.BYTES_PER_ELEMENT * 3,
  0
);
gl.enableVertexAttribArray(a_color);

// Locations of the matrices we pass to the shader
const uTranslateLoc = gl.getUniformLocation(program, 'u_translate');
const uRotateLoc = gl.getUniformLocation(program, 'u_rotate');
const uScaleLoc = gl.getUniformLocation(program, 'u_scale');

// Transform parameters (driven by the sliders in the demo)
let translateX = 0;
let translateY = 0;
let translateZ = 0;
let rotateRadian = 0;
let scale = 1;
let radianY = 1;
let radianX = 0;

const uProj = gl.getUniformLocation(program, 'u_proj');
const projMat = mat4.create();
// gl-matrix expects the vertical field of view in radians, so convert 45 degrees
mat4.perspective(projMat, (45 * Math.PI) / 180, canvas.width / canvas.height, 1, 2000);
gl.uniformMatrix4fv(uProj, false, projMat);

const render = () => {
  gl.useProgram(program);
  gl.clear(gl.COLOR_BUFFER_BIT | gl.DEPTH_BUFFER_BIT);

  const translateMat = mat4.create();
  const rotateMat = mat4.create();
  const scaleMat = mat4.create();
  mat4.translate(translateMat, translateMat, [translateX, translateY, translateZ]);
  mat4.rotate(rotateMat, rotateMat, radianY, [0, 1, 0]);
  mat4.rotate(rotateMat, rotateMat, rotateRadian, [0, 0, 1]);
  mat4.rotate(rotateMat, rotateMat, radianX, [1, 0, 0]);
  mat4.scale(scaleMat, scaleMat, [scale, scale, scale]);

  gl.uniformMatrix4fv(uTranslateLoc, false, translateMat);
  gl.uniformMatrix4fv(uRotateLoc, false, rotateMat);
  gl.uniformMatrix4fv(uScaleLoc, false, scaleMat);

  gl.drawArrays(gl.TRIANGLES, 0, pointPos.length / 3);
};

render();

ts

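import { mat4, vec3 } from 'gl-matrix';
import { Camera, Matrix4, Object3D, PerspectiveCamera, Vector3 } from 'three';

// Assumption: the listing does not show where RenderContext is declared;
// a plain alias of WebGLRenderingContext is enough for the helpers below.
export type RenderContext = WebGLRenderingContext;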

function createShader(
  gl: WebGLRenderingContext,
  type: number,
  source: string
): WebGLShader | null {
  // Create the shader object
  const shader = gl.createShader(type);
  if (!shader) {
    return null;
  }
  // Upload the GLSL source code to the shader
  gl.shaderSource(shader, source);
  // Compile the shader
  gl.compileShader(shader);
  // Check whether compilation succeeded
  const success = gl.getShaderParameter(shader, gl.COMPILE_STATUS);
  if (success) {
    return shader;
  }
  // On failure, log the compiler output and release the shader
  console.log(gl.getShaderInfoLog(shader));
  gl.deleteShader(shader);
  return null;
}

function createProgram(
  gl: WebGLRenderingContext,
  vertexShader: WebGLShader,
  fragmentShader: WebGLShader
): WebGLProgram | null {
  // Create the program object
  const program = gl.createProgram();
  if (!program) {
    return null;
  }
  // Attach the compiled WebGLShader objects to the program
  gl.attachShader(program, vertexShader);
  gl.attachShader(program, fragmentShader);
  // Link the program
  gl.linkProgram(program);
  // Check whether linking succeeded
  const success = gl.getProgramParameter(program, gl.LINK_STATUS);
  if (success) {
    return program;
  }
  // On failure, log the linker output and release the program
  console.log(gl.getProgramInfoLog(program));
  gl.deleteProgram(program);
  return null;
}

export function initWebGL(
  gl: RenderContext,
  vertexSource: string,
  fragmentSource: string
) {
  // Create the vertex shader from its source code
  const vertexShader = createShader(gl, gl.VERTEX_SHADER, vertexSource);
  // Create the fragment shader from its source code
  const fragmentShader = createShader(gl, gl.FRAGMENT_SHADER, fragmentSource);
  // Stop early if either shader failed to compile
  if (!vertexShader || !fragmentShader) {
    return null;
  }
  // Create and link the WebGLProgram
  const program = createProgram(gl, vertexShader, fragmentShader);
  return program;
}

export enum REPEAT_MODE {

NONE,

REPEAT,

MIRRORED_REPEAT,

}

export function createTexture(gl: WebGLRenderingContext, repeat?: REPEAT_MODE) {

const texture = gl.createTexture();

gl.bindTexture(gl.TEXTURE_2D, texture);

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MAG_FILTER, gl.LINEAR);

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.LINEAR);

let mod: number = gl.CLAMP_TO_EDGE;

switch (repeat) {

case REPEAT_MODE.REPEAT:

mod = gl.REPEAT;

break;

case REPEAT_MODE.MIRRORED_REPEAT:

mod = gl.MIRRORED_REPEAT;

break;

}

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_S, mod);

gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_T, mod);

return texture;

}

export function isMobile(): boolean {

if (typeof window !== 'undefined' && window.navigator) {

const userAgent = window.navigator.userAgent;

return /(mobile)/i.test(userAgent);

}

return false;

}

export function clamp(x: number, min: number, max: number) {

if (x < min) {

x = min;

} else if (x > max) {

x = max;

}

return x;

}

export function readLUTCube(file: string): {

size: number;

data: number[];

} {

let lineString = '';

let isStart = true;

let size = 0;

let i = 0;

let result: number[] = [];

const processToken = (token: string) => {

if (token === 'LUT size') {

i++;

let sizeStart = false;

let sizeStr = '';

while (file[i] !== '\n') {

if (file[i - 1] === ' ' && /\d/.test(file[i])) {

sizeStart = true;

sizeStr += file[i];

} else if (sizeStart) {

sizeStr += file[i];

}

i++;

}

size = +sizeStr;

result = new Array(size * size * size);

} else if (token === 'LUT data points') {

// Read the data points

i++;

let numStr = '';

let count = 0;

while (i < file.length) {

if (/\s|\n/.test(file[i])) {

result[count++] = +numStr;

numStr = '';

} else if (/\d|\./.test(file[i])) {

numStr += file[i];

}

i++;

}

}

};

for (; i < file.length; i++) {

if (file[i] === '#') {

isStart = true;

} else if (isStart && file[i] === '\n') {

processToken(lineString);

lineString = '';

isStart = false;

} else if (isStart) {

lineString += file[i];

}

}

return {

size,

data: result,

};

}

export async function loadImages(srcs: string[]): Promise<HTMLImageElement[]> {

const all: Promise<HTMLImageElement>[] = srcs.map(item => loadImage(item));

return Promise.all(all);

}

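export async function loadImage(src: string): Promise<HTMLImageElement> {
  return new Promise<HTMLImageElement>((resolve, reject) => {
    const img = new Image();
    // Attach the handlers before setting src so that no event can be missed
    img.onload = () => resolve(img);
    img.onerror = () => reject(new Error(`Failed to load image: ${src}`));
    img.src = src;
  });
}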

export function compute8ssedt(image: ImageData): number[][] {

// Initialize distance transform image

const distImage: number[][] = [];

for (let i = 0; i < image.height; i++) {

distImage[i] = [];

for (let j = 0; j < image.width; j++) {

distImage[i][j] = 0;

}

}

// Initialize queue for distance transform

const queue: number[][] = [];

const data = image.data;

for (let i = 0; i < image.height; i++) {

for (let j = 0; j < image.width; j++) {

const index = (i * image.width + j) * 4;

if (data[index] == 255) {

queue.push([i, j]);

}

}

}

// Compute distance transform

while (queue.length > 0) {

const p = queue.shift()!;

const x = p[0];

const y = p[1];

let minDist = Number.MAX_SAFE_INTEGER;

let minDir = [-1, -1];

// Compute distance to nearest foreground pixel in 8 directions

for (let i = -1; i <= 1; i++) {

for (let j = -1; j <= 1; j++) {

if (i == 0 && j == 0) continue;

const nx = x + i;

const ny = y + j;

if (

nx >= 0 &&

nx < image.height &&

ny >= 0 &&

ny < image.width

) {

const d = distImage[nx][ny] + Math.sqrt(i * i + j * j);

if (d < minDist) {

minDist = d;

minDir = [i, j];

}

}

}

}

// Update distance transform image and queue

distImage[x][y] = minDist;

if (minDir[0] != -1 && minDir[1] != -1) {

const nx = x + minDir[0];

const ny = y + minDir[1];

if (distImage[nx][ny] == 0) {

queue.push([nx, ny]);

}

}

}

return distImage;

}

// #region createFramebuffer

export function createFramebufferAndTexture(

gl: WebGLRenderingContext,

width: number,

height: number

): [WebGLFramebuffer | null, WebGLTexture | null] {

const framebuffer = gl.createFramebuffer();

gl.bindFramebuffer(gl.FRAMEBUFFER, framebuffer);

const texture = createTexture(gl, REPEAT_MODE.NONE);

gl.bindTexture(gl.TEXTURE_2D, texture);

gl.texImage2D(

gl.TEXTURE_2D,

0,

gl.RGBA,

width,

height,

0,

gl.RGBA,

gl.UNSIGNED_BYTE,

null

);

gl.framebufferTexture2D(

gl.FRAMEBUFFER,

gl.COLOR_ATTACHMENT0,

gl.TEXTURE_2D,

texture,

0

);

const status = gl.checkFramebufferStatus(gl.FRAMEBUFFER);

if (status === gl.FRAMEBUFFER_COMPLETE) {

gl.bindFramebuffer(gl.FRAMEBUFFER, null);

gl.bindTexture(gl.TEXTURE_2D, null);

return [framebuffer, texture];

}

return [null, null];

}

// #endregion createFramebuffer

// #region lookat

export function lookAt(cameraPos: vec3, targetPos: vec3): mat4 {

const z = vec3.create();

const y = vec3.fromValues(0, 1, 0);

const x = vec3.create();

vec3.sub(z, cameraPos, targetPos);

vec3.normalize(z, z);

vec3.cross(x, y, z);

vec3.normalize(x, x);

vec3.cross(y, z, x);

vec3.normalize(y, y);

// prettier-ignore

return mat4.fromValues(

x[0], x[1], x[2], 0,

y[0], y[1], y[2], 0,

z[0], z[1], z[2], 0,

cameraPos[0], cameraPos[1], cameraPos[2], 1

);

}

// #endregion lookat

export function ASSERT(v: any) {

if (v === void 0 || v === null || isNaN(v)) {

throw new Error(v + ' is an illegal value');

}

}

const lightAttenuationTable: Record<string, number[]> = {

'7': [1, 0.7, 1.8],

'13': [1, 0.35, 0.44],

'20': [1, 0.22, 0.2],

'32': [1, 0.14, 0.07],

'50': [1, 0.09, 0.032],

'65': [1, 0.07, 0.017],

'100': [1, 0.045, 0.0075],

'160': [1, 0.027, 0.0028],

'200': [1, 0.022, 0.0019],

'325': [1, 0.014, 0.0007],

'600': [1, 0.007, 0.0002],

'3250': [1, 0.0014, 0.000007],

};

// #region attenuation

export function lightAttenuationLookUp(dist: number): number[] {

const distKeys = Object.keys(lightAttenuationTable);

const first = +distKeys[0];

if (dist <= first) {

return lightAttenuationTable['7'];

}

for (let i = 0; i < distKeys.length - 1; i++) {

const key = distKeys[i];

const nextKey = distKeys[i + 1];

if (+key <= dist && dist < +nextKey) {

const value = lightAttenuationTable[key];

const nextValue = lightAttenuationTable[nextKey];

const k = (dist - +key) / (+nextKey - +key);

const kl = value[1] + (nextValue[1] - value[1]) * k;

const kq = value[2] + (nextValue[2] - value[2]) * k;

return [1, kl, kq];

}

}

return lightAttenuationTable['3250'];

}

// #endregion attenuation

// #region lesscode

export type BufferInfo = {

name: string;

buffer: WebGLBuffer;

numComponents: number;

isIndices?: boolean;

};

export function createBufferInfoFromArrays(

gl: RenderContext,

arrays: {

name: string;

numComponents: number;

data: Iterable<number>;

isIndices?: boolean;

}[]

): BufferInfo[] {

const result: BufferInfo[] = [];

for (let i = 0; i < arrays.length; i++) {

const buffer = gl.createBuffer();

if (!buffer) {

continue;

}

result.push({

name: arrays[i].name,

buffer: buffer,

numComponents: arrays[i].numComponents,

isIndices: arrays[i].isIndices,

});

if (arrays[i].isIndices) {

gl.bindBuffer(gl.ELEMENT_ARRAY_BUFFER, buffer);

gl.bufferData(

gl.ELEMENT_ARRAY_BUFFER,

new Uint32Array(arrays[i].data),

gl.STATIC_DRAW

);

} else {

gl.bindBuffer(gl.ARRAY_BUFFER, buffer);

gl.bufferData(

gl.ARRAY_BUFFER,

new Float32Array(arrays[i].data),

gl.STATIC_DRAW

);

}

}

return result;

}

export type AttributeSetters = Record<string, (bufferInfo: BufferInfo) => void>;

export function createAttributeSetter(

gl: RenderContext,

program: WebGLProgram

): AttributeSetters {

const createAttribSetter = (index: number) => {

return function (b: BufferInfo) {

if (!b.isIndices) {

gl.bindBuffer(gl.ARRAY_BUFFER, b.buffer);

gl.enableVertexAttribArray(index);

gl.vertexAttribPointer(

index,

b.numComponents,

gl.FLOAT,

false,

0,

0

);

}

};

};

const attribSetter: AttributeSetters = {};

const numAttribs = gl.getProgramParameter(program, gl.ACTIVE_ATTRIBUTES);

for (let i = 0; i < numAttribs; i++) {

const attribInfo = gl.getActiveAttrib(program, i);

if (!attribInfo) {

break;

}

const index = gl.getAttribLocation(program, attribInfo.name);

attribSetter[attribInfo.name] = createAttribSetter(index);

}

return attribSetter;

}

export type UniformSetters = Record<string, (v: any) => void>;

export function createUniformSetters(

gl: RenderContext,

program: WebGLProgram

): UniformSetters {

let textUnit = 0;

const createUniformSetter = (

program: WebGLProgram,

uniformInfo: {

name: string;

type: number;

}

): ((v: any) => void) => {

const location = gl.getUniformLocation(program, uniformInfo.name);

const type = uniformInfo.type;

if (type === gl.FLOAT) {

return function (v: number) {

gl.uniform1f(location, v);

};

} else if (type === gl.FLOAT_VEC2) {

return function (v: number[]) {

gl.uniform2fv(location, v);

};

} else if (type === gl.FLOAT_VEC3) {

return function (v: number[]) {

gl.uniform3fv(location, v);

};

} else if (type === gl.FLOAT_VEC4) {

return function (v: number[]) {

gl.uniform4fv(location, v);

};

} else if (type === gl.FLOAT_MAT2) {

return function (v: number[]) {

gl.uniformMatrix2fv(location, false, v);

};

} else if (type === gl.FLOAT_MAT3) {

return function (v: number[]) {

gl.uniformMatrix3fv(location, false, v);

};

} else if (type === gl.FLOAT_MAT4) {

return function (v: number[]) {

gl.uniformMatrix4fv(location, false, v);

};

} else if (type === gl.SAMPLER_2D) {

const currentTexUnit = textUnit;

++textUnit;

return function (v: WebGLTexture) {

gl.uniform1i(location, currentTexUnit);

gl.activeTexture(gl.TEXTURE0 + currentTexUnit);

gl.bindTexture(gl.TEXTURE_2D, v);

};

}

return function () {

throw new Error('cannot find corresponding type of value.');

};

};

const uniformsSetters: UniformSetters = {};

const numUniforms = gl.getProgramParameter(program, gl.ACTIVE_UNIFORMS);

for (let i = 0; i < numUniforms; i++) {

const uniformInfo = gl.getActiveUniform(program, i);

if (!uniformInfo) {

break;

}

// Array uniforms are reported as "name[0]"; register the setter under the bare name
let name = uniformInfo.name;
if (name.endsWith('[0]')) {
  name = name.slice(0, -3);
}
uniformsSetters[name] = createUniformSetter(program, uniformInfo);

}

return uniformsSetters;

}

export function setAttribute(

attribSetters: AttributeSetters,

bufferInfos: BufferInfo[]

) {

for (let i = 0; i < bufferInfos.length; i++) {

const info = bufferInfos[i];

const setter = attribSetters[info.name];

setter && setter(info);

}

}

export function setUniform(

uniformSetters: UniformSetters,

uniforms: Record<string, any>

): void {

const keys = Object.keys(uniforms);

for (let i = 0; i < keys.length; i++) {

const key = keys[i];

const v = uniforms[key];

const setter = uniformSetters[key];

setter && setter(v);

}

}

// #endregion lesscode

export function fromViewUp(view: Vector3, up?: Vector3): Matrix4 {

up = up || new Vector3(0, 1, 0);

const xAxis = new Vector3().crossVectors(up, view);

xAxis.normalize();

const yAxis = new Vector3().crossVectors(view, xAxis);

yAxis.normalize();

// prettier-ignore

return new Matrix4(

xAxis.x, yAxis.x, view.x, 0,

xAxis.y, yAxis.y, view.y, 0,

xAxis.z, yAxis.z, view.z, 0,

0, 0, 0, 1

)

}

export function getMirrorPoint(

p: Vector3,

n: Vector3,

origin: Vector3

): Vector3 {

const op = p.clone().sub(origin);

const normalizedN = n.clone().normalize();

const d = op.dot(normalizedN);

const newP = op.sub(normalizedN.multiplyScalar(2 * d));

return newP;

}

export function getMirrorVector(p: Vector3, n: Vector3): Vector3 {

const normalizedN = n.clone().normalize();

const d = p.dot(normalizedN);

return normalizedN.multiplyScalar(2 * d).sub(p);

}

export function setReflection2(

mainCamera: Camera,

virtualCamera: Camera,

reflector: Object3D

): void {

const reflectorWorldPosition = new Vector3();

const cameraWorldPosition = new Vector3();

reflectorWorldPosition.setFromMatrixPosition(reflector.matrixWorld);

cameraWorldPosition.setFromMatrixPosition(mainCamera.matrixWorld);

const rotationMatrix = new Matrix4();

rotationMatrix.extractRotation(reflector.matrixWorld);

const normal = new Vector3();

normal.set(0, 0, 1);

normal.applyMatrix4(rotationMatrix);

const view = new Vector3();

view.subVectors(reflectorWorldPosition, cameraWorldPosition);

view.reflect(normal).negate();

view.add(reflectorWorldPosition);

rotationMatrix.extractRotation(mainCamera.matrixWorld);

const lookAtPosition = new Vector3();

lookAtPosition.set(0, 0, -1);

lookAtPosition.applyMatrix4(rotationMatrix);

lookAtPosition.add(cameraWorldPosition);

const target = new Vector3();

target.subVectors(reflectorWorldPosition, lookAtPosition);

target.reflect(normal).negate();

target.add(reflectorWorldPosition);

virtualCamera.position.copy(view);

virtualCamera.up.set(0, 1, 0);

virtualCamera.up.applyMatrix4(rotationMatrix);

virtualCamera.up.reflect(normal);

virtualCamera.isCamera = true;

virtualCamera.lookAt(target);

if (

virtualCamera instanceof PerspectiveCamera &&

mainCamera instanceof PerspectiveCamera

) {

virtualCamera.far = mainCamera.far; // Used in WebGLBackground

virtualCamera.updateMatrixWorld();

virtualCamera.projectionMatrix.copy(mainCamera.projectionMatrix);

} else {

// reflectCamera.updateMatrixWorld();

}

}